After several months of exploring various summation and acceleration methods, it is time to synthesize these tools before we apply them to one of their most important domains: Perturbation Theory.

When a power series has a small or even zero radius of convergence, or converges too slowly to be numerically useful, taking the limit of its partial sums is no longer enough. Here is a recap of the methods we have covered and how they bridge the gap between formal series and numerical values.

Summation Methods: Taming Divergence

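- Borel and Borel–Écalle: The Borel transform divides the n-th coefficient by n!, turning factorially growing coefficients into a series with a nonzero radius of convergence in the Borel plane; a Laplace integral then maps the (analytically continued) result back to the original variable. When the Borel transform has singularities on the integration path, the series is not Borel summable, and the Borel–Écalle framework uses resurgence theory to handle them (for example through median resummation), so that a well-defined “resummed” value can still be assigned.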

- Euler and Zeta summation: These methods assign finite values to divergent sums in a way that is consistent with analytic continuation. Euler summation is well suited to alternating series, while Zeta summation (zeta-function regularization) handles sums such as 1 + 2 + 3 + ⋯, assigned the value ζ(−1) = −1/12, which frequently appear in quantum vacuum energy calculations such as the Casimir effect.

- Generic summation: Beyond these classical approaches, we have also explored the idea of generic summation, which provides a unifying framework for assigning values to divergent or slowly convergent series.
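To make the Borel step concrete, here is a minimal Python sketch using Euler's classic series ∑ (−1)ⁿ n! xⁿ as an assumed test case. Its Borel transform is the geometric series ∑ (−1)ⁿ uⁿ = 1/(1 + u), which we continue analytically to the whole positive axis and integrate against e^{−t} with Simpson's rule. The function names, the cutoff `t_max` and the step count are illustrative choices, not part of any library.

```python
import math

def euler_series_coeff(n: int) -> int:
    """a_n = (-1)^n n!: factorially growing coefficients of sum a_n x^n."""
    return (-1) ** n * math.factorial(n)

def borel_coeff(n: int) -> float:
    """Borel transform coefficient a_n / n!; for this series it is just (-1)^n."""
    return euler_series_coeff(n) / math.factorial(n)

def borel_transform(u: float) -> float:
    """Closed form of sum_n borel_coeff(n) * u^n = 1/(1+u).

    The power series only converges for |u| < 1, but 1/(1+u) continues it
    to the whole positive axis, which is what the Laplace integral needs.
    """
    return 1.0 / (1.0 + u)

def borel_sum(x: float, t_max: float = 60.0, steps: int = 6000) -> float:
    """Approximate S(x) = ∫_0^∞ e^{-t} B(x t) dt with composite Simpson's rule.

    Assumes e^{-t_max} is negligible so the integral can be cut off there;
    `steps` must be even for Simpson's rule.
    """
    def integrand(t: float) -> float:
        return math.exp(-t) * borel_transform(x * t)

    h = t_max / steps
    total = integrand(0.0) + integrand(t_max)
    for k in range(1, steps):
        total += (4 if k % 2 else 2) * integrand(k * h)
    return total * h / 3

def partial_sum(x: float, n_terms: int) -> float:
    """Ordinary partial sum of the divergent series, for comparison."""
    return sum(euler_series_coeff(n) * x ** n for n in range(n_terms))

x = 0.1
print(borel_sum(x))          # ≈ 0.915633
print(partial_sum(x, 10))    # close, but only to within the smallest term
print(partial_sum(x, 40))    # far off: the series diverges
```

The truncated series can never do better than its smallest term (about 10! · 10⁻¹⁰ here), whereas the Borel integral keeps improving as the quadrature is refined.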
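For the Euler and Zeta bullet, the following sketch (hypothetical helper names, exact arithmetic via `fractions.Fraction`) uses the Euler transform ∑ₙ (−1)ⁿ aₙ = ∑ₖ (−1)ᵏ (Δᵏa)₀ / 2ᵏ⁺¹, where Δ is the forward difference. It sums Grandi's series 1 − 1 + 1 − ⋯ and 1 − 2 + 3 − 4 + ⋯, then, assuming the standard relation η(s) = (1 − 2¹⁻ˢ) ζ(s) between the Dirichlet eta and Riemann zeta functions, converts the latter into the zeta-regularized value of 1 + 2 + 3 + ⋯.

```python
import math
from fractions import Fraction

def forward_difference_at_zero(a, k):
    """(Δ^k a)_0 = sum_j (-1)^(k-j) C(k, j) a_j, with (Δa)_n = a_{n+1} - a_n."""
    return sum((-1) ** (k - j) * math.comb(k, j) * a(j) for j in range(k + 1))

def euler_sum(a, terms):
    """Euler transform of sum_{n>=0} (-1)^n a_n, truncated after `terms` terms."""
    return sum(
        Fraction((-1) ** k * forward_difference_at_zero(a, k)) / 2 ** (k + 1)
        for k in range(terms)
    )

grandi = euler_sum(lambda n: 1, 10)                 # 1 - 1 + 1 - ...
eta_minus_one = euler_sum(lambda n: n + 1, 10)      # 1 - 2 + 3 - 4 + ... = eta(-1)
zeta_minus_one = eta_minus_one / (1 - 2 ** 2)       # eta(s) = (1 - 2^(1-s)) zeta(s) at s = -1
log2 = euler_sum(lambda n: Fraction(1, n + 1), 40)  # 1 - 1/2 + 1/3 - ... = ln 2

print(grandi, eta_minus_one, zeta_minus_one)  # 1/2 1/4 -1/12
print(float(log2), math.log(2))
```

The last line also shows the transform's accelerating side: the slowly convergent series for ln 2 becomes ∑ 1/((k + 1) 2ᵏ⁺¹), which gains about one bit per term.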

Acceleration Techniques: Enhancing Convergence

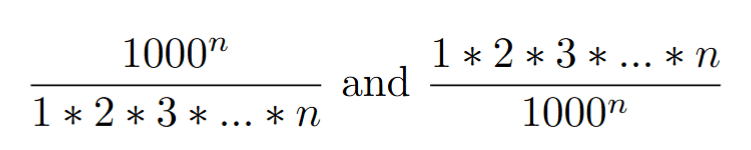

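- Shanks Transformation and Padé Approximants: These nonlinear methods are closely related: applying the Shanks transformation to the partial sums of a power series produces Padé approximants, which are in turn connected to continued fractions. Because rational approximants can represent poles, they often extend a function well beyond the radius of convergence of its power series.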

- Richardson Extrapolation: A fundamental tool of numerical analysis. When the error of a sequence S_n has a known asymptotic form, such as S_n ≈ S + c₁/n + c₂/n² + ⋯, Richardson extrapolation combines a few terms so that the leading error terms cancel, giving a much more precise estimate of the limit S.
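A minimal Python sketch of the Shanks transformation from the list above; `shanks` and `iterated_shanks` are hypothetical helper names. It shows both behaviors mentioned there: accelerating the slowly convergent series 1 − 1/2 + 1/3 − ⋯ = ln 2, and recovering 1/(1 − x) = −1 from the divergent partial sums of the geometric series at x = 2, outside its radius of convergence, since the limit is a rational function with a single pole.

```python
import math
from itertools import accumulate

def shanks(seq):
    """One Shanks step: S(A_n) = A_{n+1} - (A_{n+1} - A_n)^2 / (A_{n+1} - 2 A_n + A_{n-1})."""
    out = []
    for n in range(1, len(seq) - 1):
        a_prev, a, a_next = seq[n - 1], seq[n], seq[n + 1]
        denom = a_next - 2 * a + a_prev
        if denom == 0:
            # Zero second difference: the transform is undefined; as an assumed
            # fallback we keep the latest term unchanged.
            out.append(a_next)
        else:
            out.append(a_next - (a_next - a) ** 2 / denom)
    return out

def iterated_shanks(seq):
    """Apply the Shanks step repeatedly while at least three terms remain."""
    seq = list(seq)
    while len(seq) >= 3:
        seq = shanks(seq)
    return seq[-1]

# Slow convergence: partial sums of 1 - 1/2 + 1/3 - ... (limit ln 2)
ln2_partial = list(accumulate((-1) ** k / (k + 1) for k in range(9)))
print(ln2_partial[-1] - math.log(2))               # error of the raw partial sum
print(iterated_shanks(ln2_partial) - math.log(2))  # much smaller

# Divergence: partial sums 1, 3, 7, 15 of 1 + x + x^2 + ... at x = 2
geometric_partial = list(accumulate(2 ** k for k in range(4)))
print(shanks(geometric_partial))  # [-1.0, -1.0], i.e. 1/(1 - x) at x = 2
```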
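Richardson extrapolation can be sketched as follows, assuming the error expansion is a power series in 1/n and that n is doubled at each step. The test sequence, the partial sums of ∑ 1/k² (limit π²/6), is our own choice; its error behaves like −1/n + 1/(2n²) − ⋯, so the raw partial sums converge only like 1/n. The helper names are hypothetical.

```python
import math

def inverse_square_partial_sum(n: int) -> float:
    """S_n = sum_{k=1}^{n} 1/k^2, converging slowly (error ~ 1/n) to pi^2/6."""
    return sum(1.0 / k ** 2 for k in range(1, n + 1))

def richardson(values):
    """Richardson extrapolation with step doubling.

    Assumes values[j] = S(n0 * 2**j) and S(n) = S + c1/n + c2/n**2 + ...
    Level p cancels the c_p / n**p term:
        R_new[j] = (2**p * R[j+1] - R[j]) / (2**p - 1)
    """
    row = list(values)
    p = 1
    while len(row) > 1:
        factor = 2 ** p
        row = [(factor * row[j + 1] - row[j]) / (factor - 1) for j in range(len(row) - 1)]
        p += 1
    return row[0]

ns = [10, 20, 40, 80, 160]
sums = [inverse_square_partial_sum(n) for n in ns]
target = math.pi ** 2 / 6
print(sums[-1] - target)          # about -6e-3 with 160 terms
print(richardson(sums) - target)  # far smaller, using only these five partial sums
```

Unlike the Shanks transformation, each Richardson level is a fixed linear combination, so it works only when the assumed form of the error expansion is correct.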

An important consistency check underlies all these techniques: in the cases we have studied, whenever different summation or acceleration methods apply to the same series, they agree on a common value. This agreement between independent approaches is not accidental: it reflects the fact that these methods capture an underlying analytic object beyond the formal series itself. When they work, they do not merely assign a value; they reveal a coherent extension of the function that the original divergent expansion was hinting at.
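As a small illustration of this agreement, the sketch below applies three of the recapped methods to Grandi's series 1 − 1 + 1 − ⋯, an example chosen here because all three apply to it: Borel summation (its Borel transform ∑ (−t)ⁿ/n! is e^{−t}, evaluated from the coefficients), the Euler transform, and one Shanks step on the partial sums 1, 0, 1. All helper names are hypothetical and the numerical parameters are arbitrary.

```python
import math

def grandi_coeff(n: int) -> int:
    """a_n = (-1)^n: coefficients of Grandi's series 1 - 1 + 1 - ..."""
    return (-1) ** n

def borel_value(t_max: float = 20.0, steps: int = 2000, terms: int = 100) -> float:
    """Borel sum: ∫_0^∞ e^{-t} B(t) dt with B(t) = sum_n a_n t^n / n!.

    B is evaluated from its (entire) Taylor series; the integral is cut off at
    t_max and computed with composite Simpson's rule (steps must be even).
    """
    def borel_transform(t: float) -> float:
        total, term = 0.0, 1.0  # term holds t^n / n!
        for n in range(terms):
            total += grandi_coeff(n) * term
            term *= t / (n + 1)
        return total

    def integrand(t: float) -> float:
        return math.exp(-t) * borel_transform(t)

    h = t_max / steps
    s = integrand(0.0) + integrand(t_max)
    for k in range(1, steps):
        s += (4 if k % 2 else 2) * integrand(k * h)
    return s * h / 3

def euler_value(terms: int = 20) -> float:
    """Euler transform sum_k (-1)^k (Δ^k b)_0 / 2^(k+1), with b_n = (-1)^n a_n = 1."""
    total = 0.0
    for k in range(terms):
        diff = sum((-1) ** (k - j) * math.comb(k, j) * grandi_coeff(j) ** 2
                   for j in range(k + 1))
        total += (-1) ** k * diff / 2 ** (k + 1)
    return total

def shanks_value() -> float:
    """One Shanks step on the partial sums A_0, A_1, A_2 = 1, 0, 1."""
    a0, a1, a2 = 1.0, 0.0, 1.0
    return a2 - (a2 - a1) ** 2 / (a2 - 2 * a1 + a0)

print(borel_value(), euler_value(), shanks_value())  # all ≈ 0.5
```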